nice info gan

Cardalonia Pre-Sale is Live (How To Participate)

History of the Shinobi World War and the Birth of the Shinobi Legends Nice

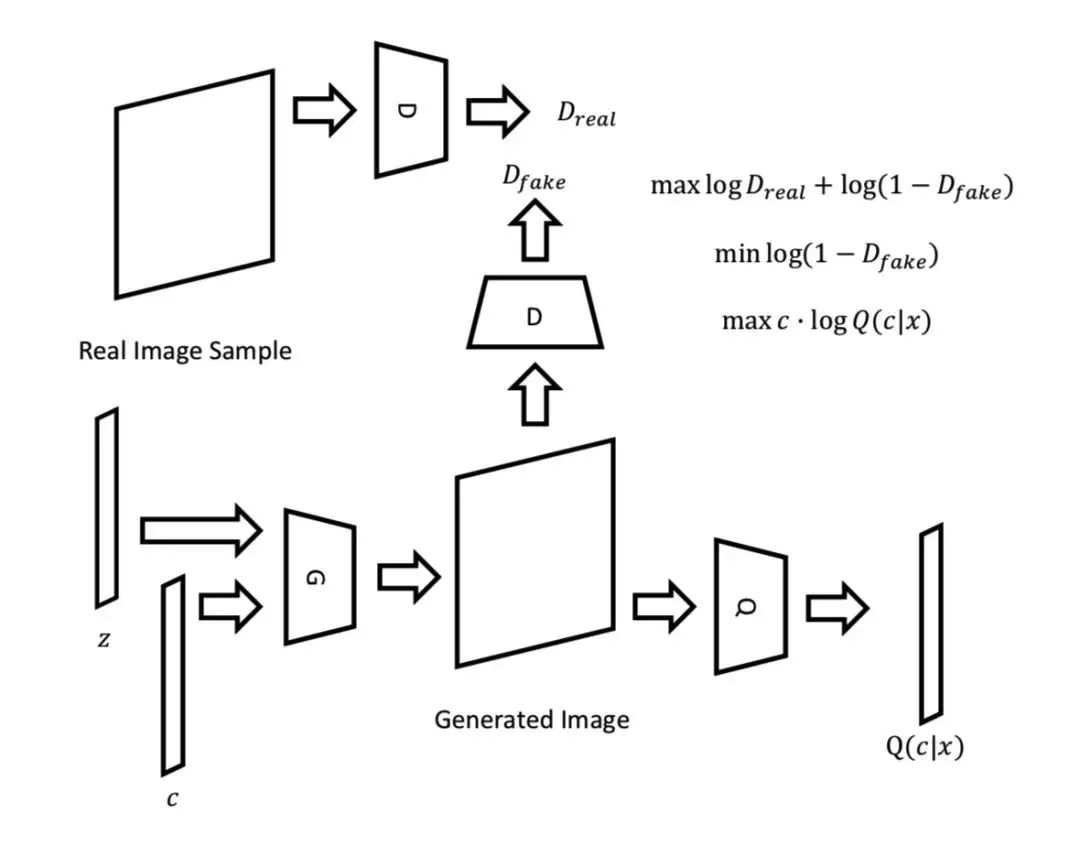

The approach is motivated from an information-theoretic point of view and is based on maximizing the mutual information between the latent codes and the generated images. For completeness, it would be nice to have V(D,G) formulated in terms of x, c, z: it is not 100% clear from equation (1). However, in a pure GAN the learning algorithm...

GAN Lecture 7 (2018) Info GAN, VAEGAN, BiGAN YouTube

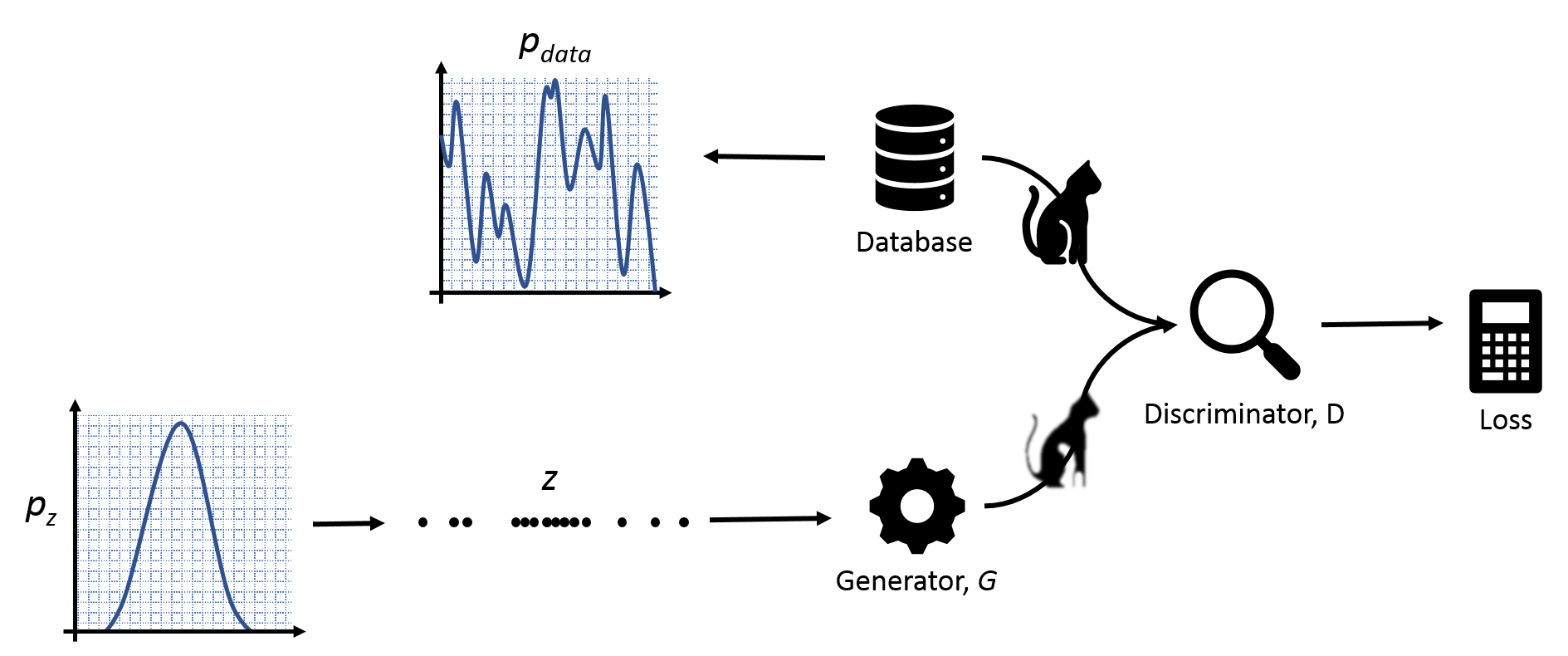

Claude Shannon's 1948 paper defined the amount of information which can be transferred in a noisy channel in terms of power and bandwidth. A new research study conducted by Xi Chen and team proposes a GAN-styled neural network which uses information theory to learn "disentangled representations" in an unsupervised manner.

NICEGANpytorch/main.py at master · alpc91/NICEGANpytorch · GitHub

The main issue in NICE-GAN is the coupling of translation with discrimination along the encoder, which could incur training inconsistency when we play the min-max game via GAN. To tackle this issue, we develop a decoupled training strategy by which the encoder is only trained when maximizing the adversary loss while keeping frozen otherwise.

👉Ctrl+R on Twitter "RT erigostore Wow, just found out. Nice info gan 👍"

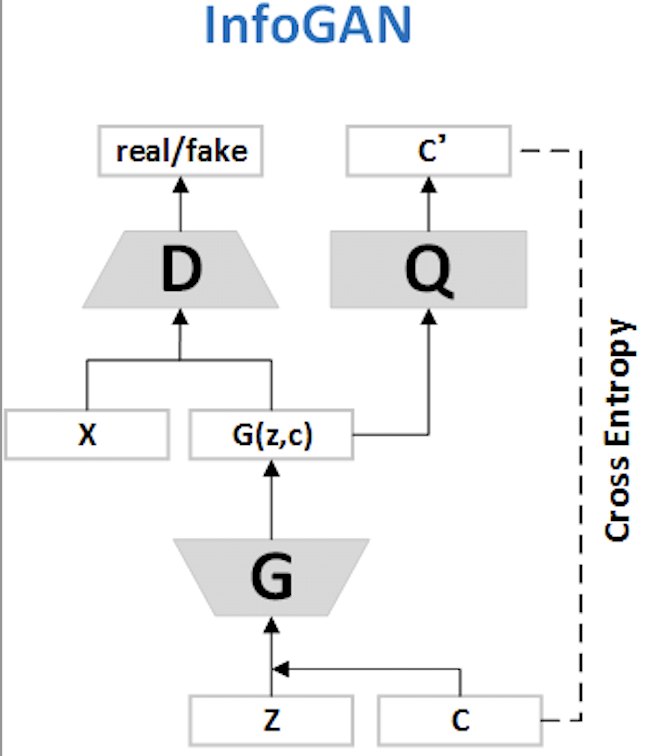

The Structure of InfoGAN. A normal GAN has two fundamental elements: a generator that accepts random noise and produces fake images, and a discriminator that accepts both fake and real images and decides whether each image is real or fake.
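The two roles described above can be sketched as a pair of loss functions. This is a minimal illustration, not code from any of the linked repositories: `d_real` and `d_fake` are assumed to be the discriminator's output probabilities (strictly between 0 and 1) for a real and a generated image, the function names are hypothetical, and the generator loss shown is the commonly used non-saturating variant rather than anything the snippet specifies.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy with real images labeled 1 and generated images labeled 0."""
    return -(math.log(d_real) + math.log(1.0 - d_fake))

def generator_loss(d_fake):
    """Non-saturating generator loss: low when the discriminator scores fakes as real."""
    return -math.log(d_fake)

# A discriminator that separates real from fake well has a low loss...
print(discriminator_loss(d_real=0.9, d_fake=0.1))
# ...while one that outputs 0.5 for everything cannot tell them apart.
print(discriminator_loss(d_real=0.5, d_fake=0.5))
# The generator's loss falls as its fakes are scored closer to "real".
print(generator_loss(d_fake=0.1), generator_loss(d_fake=0.9))
```

The two losses pull in opposite directions on `d_fake`, which is the adversarial game in miniature.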

Dean nice info gan 😂 doesn't his wife get mad, guys? 🤣🤣 tiktok

GitHub - openai/InfoGAN: Code for reproducing key results in the paper "InfoGAN: Interpretable Representation Learning by Information Maximizing Generative Adversarial Nets"

infoGAN: Separating Order from Chaos, Zhihu

Generative Adversarial Networks, or GANs, are deep-learning-based generative models that have seen wide success. There are thousands of papers on GANs and many hundreds of named GANs, that is, models with a defined name that often includes "GAN", such as DCGAN, as opposed to a minor extension to the method. Given the vast size of the GAN literature and the number of models, it can be, at...

clowningweeb on Twitter "nice info gan 👍🏻…

InfoGAN is a type of generative adversarial network that modifies the GAN objective to encourage it to learn interpretable and meaningful representations. This is done by maximizing the mutual information between a fixed small subset of the GAN's noise variables and the observations.

Stream gungbaster5 music Listen to songs, albums, playlists for free

Mutual Information. InfoGAN stands for information maximizing GAN. To maximize information, InfoGAN uses mutual information. In information theory, the mutual information between X and Y, I(X; Y), measures the "amount of information" learned from knowledge of random variable Y about the other random variable X.
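To make the definition concrete, here is a small sketch that computes I(X; Y) for discrete variables from a joint probability table. The function name `mutual_information` and the two example tables are illustrative assumptions, not taken from any of the linked sources; values are in nats.

```python
import math

def mutual_information(joint):
    """I(X; Y) in nats for a joint probability table joint[x][y]."""
    p_x = [sum(row) for row in joint]          # marginal of X (row sums)
    p_y = [sum(col) for col in zip(*joint)]    # marginal of Y (column sums)
    mi = 0.0
    for i, row in enumerate(joint):
        for j, p in enumerate(row):
            if p > 0:  # zero-probability cells contribute nothing
                mi += p * math.log(p / (p_x[i] * p_y[j]))
    return mi

# Independent variables: knowing Y says nothing about X.
independent = [[0.25, 0.25], [0.25, 0.25]]
# Perfectly coupled variables: knowing Y determines X.
dependent = [[0.5, 0.0], [0.0, 0.5]]

print(mutual_information(independent))  # 0.0
print(mutual_information(dependent))    # log(2), about 0.693
```

The independent case is the situation InfoGAN tries to avoid: a latent code that shares no information with the generated sample.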

GitHub LJSthu/infoGAN Implementation InfoGAN in pytorch

InfoGAN is designed to maximize the mutual information between a small subset of the latent variables and the observation. A lower bound of the mutual information objective is derived that can be optimized efficiently.
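The lower bound mentioned above is usually written as L_I = E[log Q(c|x)] + H(c), where Q is an auxiliary distribution approximating the posterior P(c|x). The sketch below checks this on a toy discrete problem; the distributions `p_c` and `p_x_given_c`, and all function names, are made up for illustration and stand in for the latent code prior and the generator.

```python
import math

def entropy(p):
    """Shannon entropy in nats of a discrete distribution."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)

def true_posterior(p_c, p_x_given_c):
    """Exact P(c|x) via Bayes' rule, indexed as post[x][c]."""
    post = []
    for x in range(len(p_x_given_c[0])):
        joint = [p_c[c] * p_x_given_c[c][x] for c in range(len(p_c))]
        total = sum(joint)
        post.append([j / total for j in joint])
    return post

def lower_bound(p_c, p_x_given_c, q_c_given_x):
    """L_I = E_{c ~ P(c), x ~ P(x|c)}[log Q(c|x)] + H(c), in nats."""
    expected_log_q = 0.0
    for c, pc in enumerate(p_c):
        for x, px_c in enumerate(p_x_given_c[c]):
            if pc * px_c > 0:
                expected_log_q += pc * px_c * math.log(q_c_given_x[x][c])
    return expected_log_q + entropy(p_c)

p_c = [0.5, 0.5]                          # toy prior over a binary code
p_x_given_c = [[0.8, 0.2], [0.3, 0.7]]    # toy stand-in for the generator
exact_q = true_posterior(p_c, p_x_given_c)
uninformed_q = [[0.5, 0.5], [0.5, 0.5]]   # a Q that ignores x entirely

tight = lower_bound(p_c, p_x_given_c, exact_q)
loose = lower_bound(p_c, p_x_given_c, uninformed_q)
print(tight, loose)
```

With the exact posterior the bound equals the true mutual information; with a Q that ignores x it drops to zero. Training Q therefore tightens the bound, and training the generator raises it.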

How to Develop an Information Maximizing GAN (InfoGAN) in Keras

generator becomes G(z, c). However, in standard GAN, the generator is free to ignore the additional latent code c by finding a solution satisfying P_G(x|c) = P_G(x). To cope with the problem of trivial codes, we propose an information-theoretic regularization: there should be high mutual information

Week 1 Memes

The 4-Port USB-C GaN Wall Charger declutters the workspace while charging laptops, tablets, phones, smart watches and earbuds simultaneously - no extra power bricks needed. It is compact and.

InfoGAN Interpretable Representation Learning by Information

For your daily information

Creating Videos with Neural Networks using GAN YouTube

InfoGAN, short for Information Maximizing Generative Adversarial Networks, is a powerful machine learning technique that extends the capabilities of traditional Generative Adversarial Networks (GANs). While GANs are known for generating high-quality synthetic data, they lack control over the specific features of the generated samples.

Deep Learning 42 (3) TensorFlow Implementation of Info GAN YouTube

Generative models. This post describes four projects that share a common theme of enhancing or using generative models, a branch of unsupervised learning techniques in machine learning. In addition to describing our work, this post will tell you a bit more about generative models: what they are, why they are important, and where they might be going.

Generative Adversarial Network (GAN) in TensorFlow Part 1 · Machine

1. Introduction. InfoGAN was introduced by Chen et al. [1] in 2016. In addition to the generator and discriminator of a standard GAN, it has an auxiliary network called the Q-network.
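In the InfoGAN paper, the Q-network takes a generated sample and predicts the latent code that produced it. For a categorical code, its training signal is a cross-entropy between that prediction and the code that was actually sampled. The sketch below shows only this loss; the logits are hand-written stand-ins for a real network's output, and `softmax`, `sample_code`, and `q_loss` are hypothetical helper names.

```python
import math
import random

def softmax(logits):
    """Convert raw scores into a probability distribution."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sample_code(n_categories, rng):
    """Draw a categorical latent code uniformly at random."""
    return rng.randrange(n_categories)

def q_loss(q_logits, code):
    """Cross-entropy between Q's prediction and the code fed to the generator."""
    return -math.log(softmax(q_logits)[code])

rng = random.Random(0)
code = sample_code(10, rng)

# Stand-in for Q's output when the generated image carries no trace of the code.
uniform_logits = [0.0] * 10
# Stand-in for Q's output when the code is clearly recoverable from the image.
confident_logits = [5.0 if k == code else 0.0 for k in range(10)]

print(q_loss(uniform_logits, code), q_loss(confident_logits, code))
```

Minimizing this loss with respect to both Q and the generator pushes the generator to make the code recoverable from its output.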